I recently developed a virtual reality audio toy for a class I’m taking at Columbia College with Austin McCasland as the instructor.

Synopsis

VRAudioLoopToy is a prototype of a system that enables a user to play and exchange audio loop tracks in an immersive virtual reality experience.

Inspiration

The muse for the project is a musical toy for children called the Mozart Magic Cube.

How it was Built

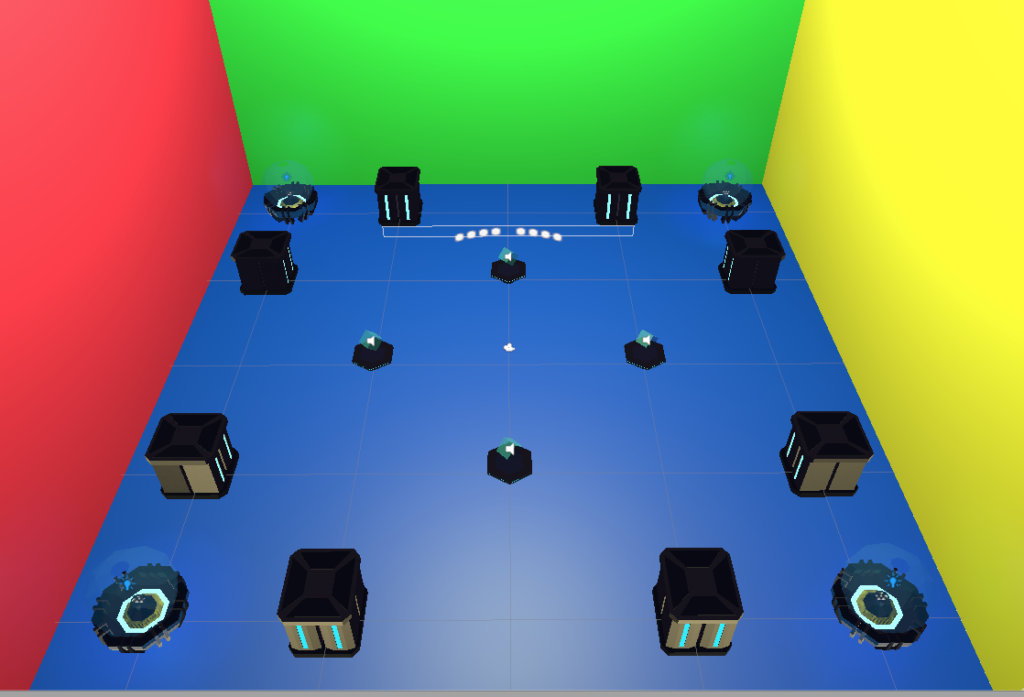

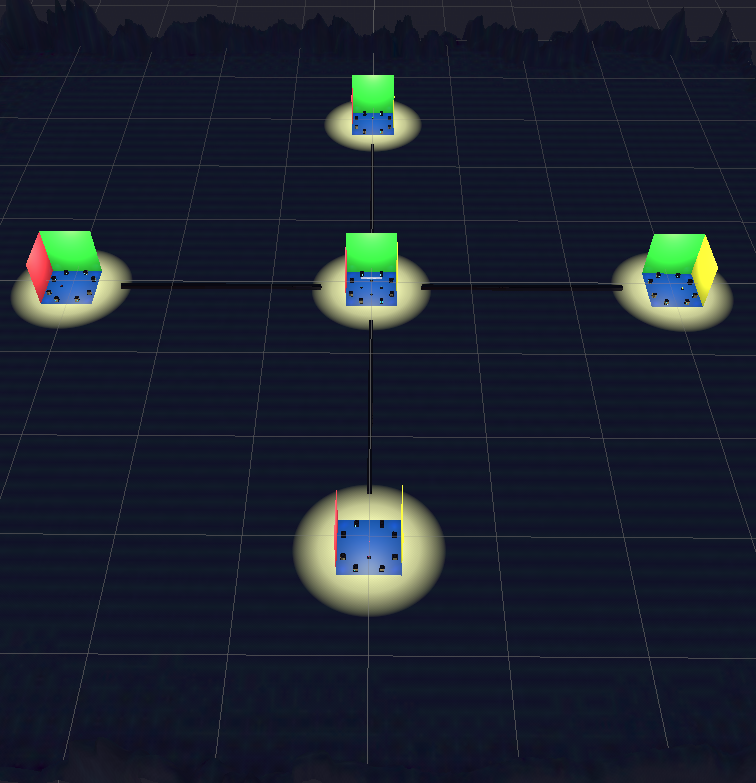

The key aspect of the Mozart Magic Cube is that you can listen to a piece of music by Mozart and mute or unmute any of the featured instruments by pressing the corresponding button. For VRAudioLoopToy, I created a central node (room) containing four cubes, each representing an instrument class, laid out on the cardinal directions:

North: Beat cube

South: Bass cube

East: Lead cube

West: Vibe cube

I wrote three original loops for bass, lead, and vibe, and used a virtual drummer from GarageBand to produce the beat. Each loop is represented by a cube, and the room is styled on a vaguely sci-fi theme.

The player begins in the center of the room, and kicks off the audio experience by using gaze interaction with any of the cubes. Selecting a cube starts the audio engine. Audio is 3D spatial, with each cube having its own sound source. While all 4 audio clips are loaded and scheduled in the engine, only the selected cube’s audio is unmuted. This way, when the user selects another cube in the main room, its audio is immediately accessible. Selecting a cube that is already playing results in that cube’s audio being muted.
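The selection behavior described above can be sketched in a few lines. This is an illustrative Python model, not the project's Unity code: the `Cube` and `CentralNode` names are hypothetical, and it assumes that several cubes may be unmuted at once (as with the instruments on the Mozart Magic Cube), which the description implies but does not state outright.

```python
from dataclasses import dataclass


@dataclass
class Cube:
    """One instrument cube. Its loop is always scheduled; only the mute flag changes."""
    name: str
    muted: bool = True  # every loop starts muted but already running in sync


class CentralNode:
    """Hypothetical model of the central room and its four cubes."""

    def __init__(self, names):
        self.cubes = {name: Cube(name) for name in names}
        self.engine_started = False

    def select(self, name):
        """Gaze-select a cube: start the engine on first use, then toggle mute."""
        if not self.engine_started:
            # The first selection kicks off all loops together so they stay aligned.
            self.engine_started = True
        cube = self.cubes[name]
        cube.muted = not cube.muted
        return cube.muted

    def audible(self):
        """Names of the cubes currently unmuted, in alphabetical order."""
        return sorted(n for n, c in self.cubes.items() if not c.muted)


node = CentralNode(["beat", "bass", "lead", "vibe"])
node.select("beat")   # starts the engine and unmutes the beat
node.select("bass")   # bass joins immediately because it was already running
node.select("beat")   # selecting a playing cube mutes it again
print(node.audible())
```

Because muting only flips a flag on a loop that is already running, a newly selected cube enters in time with the others rather than starting from its own beginning.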

System Behavior

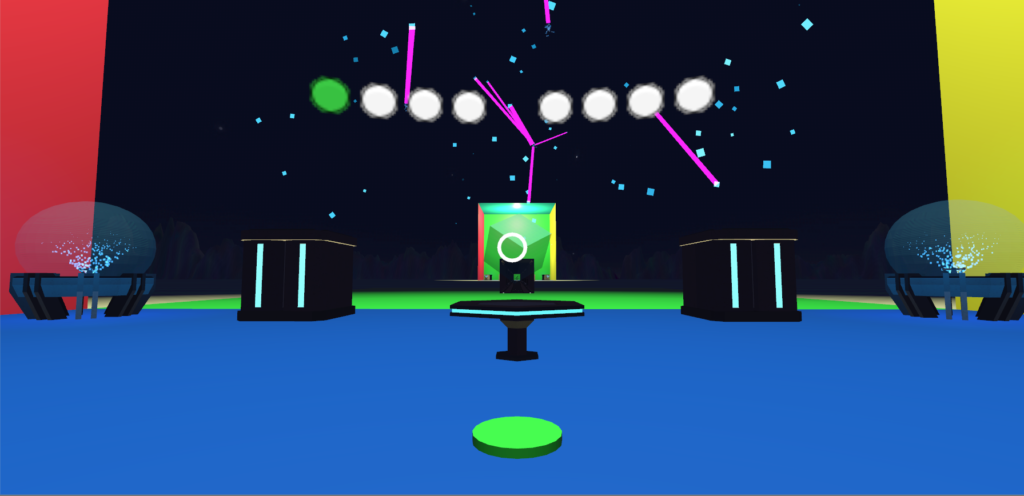

When a user selects a cube and begins to play the audio, an animation shows the wall beyond it lowering to the ground. The outside world shows a corridor which leads to another node (room). A button also becomes available on the floor, color-coordinated to match the theme of the cube. The player can select this button using the standard gaze interaction. Upon selecting the button, the user is transported to the corresponding “outpost” node at high speed via the corridor.

Each outpost node offers the user the opportunity to exchange the loop for another loop of the same type (e.g. a beat for a beat). Indicators in a HUD help track which elements are selected and playing.

When the user is in an outpost node, the unrelated audio is effectively muted by the sheer distance from the other sound sources. The context is different in the outpost nodes, and the underlying code architecture acts accordingly. When a new sound is selected for exchange, the audio is scheduled for the beginning of the next loop. Scheduling exchanged audio on the next loop avoids having the new audio loaded onto the CPU/GPU until it is actually needed. This is necessary for mobile VR if the concept is to be extended to include more nodes, or if more audio choices are to be made available in each node.
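To make "the beginning of the next loop" concrete, here is a small worked sketch of the arithmetic. It is written in Python for illustration only; the function names, the tempo, and the loop length are assumptions, not values from the project. In Unity, a comparable calculation would presumably feed a scheduled-playback call measured against the audio clock, though the exact API used is not stated here.

```python
import math


def loop_duration(bpm, beats_per_loop):
    """Length of one loop in seconds: beats times seconds per beat."""
    return beats_per_loop * 60.0 / bpm


def next_loop_start(now, engine_start, bpm, beats_per_loop):
    """Time of the first loop boundary strictly after `now`.

    `engine_start` is the clock time at which the loops first began playing.
    """
    length = loop_duration(bpm, beats_per_loop)
    elapsed = now - engine_start
    loops_completed = math.floor(elapsed / length)
    return engine_start + (loops_completed + 1) * length


# Assumed example: 120 BPM, 16-beat loops, engine started at t = 1.0 s.
# The user picks a replacement loop at t = 13.0 s.
print(loop_duration(120, 16))
print(next_loop_start(13.0, 1.0, 120, 16))
```

With these assumed values each loop lasts 8 seconds, so a selection made 12 seconds after the engine started lands in the second loop and the exchange is scheduled for the start of the third.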

Working Process

While I had the plan for the central node from the outset of the project, the outpost nodes on the cardinal directions came later. I originally intended for everything to take place in one room, but that quickly felt stifling. While it was admittedly cliché, I soon wanted to knock the walls down. Knocking the walls down offered a good opportunity to practice animations, particle effects, and a dash system for player movement. The most complicated problem was audio not synchronizing properly on the first iteration in an outpost node, even though logging the time values of the audio indicated they were correctly entered into the system. After a lot of research, I finally realized that the problem was not a bug in my code but a logical one. Since I was using spatial audio, and teleporting/dashing the transform of the audio sources, I needed to turn off the Doppler calculations in the audio preferences pane of Unity itself. This destroyed a rather fun audio artifact during dashing between nodes, but fixed the timing issue.

Strengths

It’s fun. It works. It could easily be extended. Physically exploring a space to find and manipulate audio is much more satisfying than looking through a list of words meant to identify sounds in a standard audio app or DAW.

Room for Improvement

Interactions with the objects in space could be made much more satisfying. For example, when exchanging related sound cubes, the transforms just immediately trade positions. There should instead be an animation and an effect. Also, there should be rhythmic pulsing and other indications when a new sound is about to begin playing.

Next Steps

If I decided to develop this into a full-fledged app, I would include a community aspect. You should be able to travel to another user’s nodes in the game space and try out their audio. Since this is a BPM-driven experience, a user could post all their different loops at different BPMs and the software could filter and only expose the nodes at the tempo a user was seeking (perhaps including BPMs at half or double speed, since those could also technically work). Ideally, there would also be some limited personalization of the appearance of nodes, enough to give a vibe without distracting from the user experience.
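The tempo filter is simple enough to sketch. The following Python is a hypothetical illustration of the idea rather than a design for the app: the node records, the `bpm` field, and the tolerance value are all assumptions.

```python
def compatible_tempos(target_bpm):
    """Tempos that can lock to the target: the same, half speed, and double speed."""
    return (target_bpm / 2, target_bpm, target_bpm * 2)


def filter_nodes(nodes, target_bpm, tolerance=0.01):
    """Keep only the nodes whose loops match the target tempo or its half/double."""
    wanted = compatible_tempos(target_bpm)
    return [
        node for node in nodes
        if any(abs(node["bpm"] - tempo) <= tolerance for tempo in wanted)
    ]


# Made-up community nodes for illustration.
community = [
    {"owner": "a", "bpm": 60},
    {"owner": "b", "bpm": 90},
    {"owner": "c", "bpm": 120},
    {"owner": "d", "bpm": 240},
]
print([node["owner"] for node in filter_nodes(community, 120)])
```

A user seeking 120 BPM would be shown the nodes at 60, 120, and 240 BPM, while the 90 BPM node stays hidden.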